In this post we configure Openswan and Windows 7 (or Vista) to bring up an IPsec + L2TP tunnel. The point of difference is that we use certificates to secure the IPsec connection. Put another way, we authenticate IKE phase 1 with RSA certs rather than a pre-shared key. Configuring and maintaining this properly is not easy.

We will also look at how the Windows Vista and Windows 7 clients are configured with certificates (spoiler: it's the same way for both clients).

I'm a bit of an Openswan fan. I don't exactly know why, since strongSwan seems to be more feature rich. Maybe I defer to Openswan because it's easily installed through any Linux distro's package manager. Or perhaps because I started muddling around with it back in 2005. Anyway, a lot of what is written here will carry over to strongSwan.

The Openswan limitation I most recently bumped up against was the inability to configure lifetime bytes (lifebytes), which strongSwan offers.

If there's anything in all this that isn't clear, then I'm happy to field comments or questions.

If you're wondering about the Oklahoma references later, it's because yesterday I watched the 1940 adaptation of The Grapes of Wrath.

Server Version

# cat /etc/debian_version

7.11

Files

/etc/ipsec.conf

/etc/ssl/openssl.cnf

/etc/xl2tpd/xl2tpd.conf

/etc/ppp/options.l2tpd.lns

/etc/ppp/chap-secrets

Openswan

Simply take the l2tp-cert.conf example configuration and amend it in a few places. Insert the contents of this file into the /etc/ipsec.conf file. You can just cat the file, appending it to ipsec.conf. For example:

cat l2tp-cert.conf >> /etc/ipsec.conf

The examples directory contains lots of handy files:

# ls -la /etc/ipsec.d/examples/

total 44

drwxr-xr-x 2 root root 4096 Mar 9 20:12 .

drwxr-xr-x 10 root root 4096 Mar 9 20:12 ..

-rw-r--r-- 1 root root 1659 May 27 2012 hub-spoke.conf

-rw-r--r-- 1 root root 1017 May 27 2012 ipv6.conf

-rw-r--r-- 1 root root 1736 May 27 2012 l2tp-cert.conf

-rw-r--r-- 1 root root 1825 May 27 2012 l2tp-psk.conf

-rw-r--r-- 1 root root 1156 May 27 2012 linux-linux.conf

-rw-r--r-- 1 root root 1580 May 27 2012 mast-l2tp-psk.conf

-rw-r--r-- 1 root root 235 May 27 2012 oe-exclude-dns.conf

-rw-r--r-- 1 root root 1694 May 27 2012 sysctl.conf

-rw-r--r-- 1 root root 664 May 27 2012 xauth.conf

The following example config has all the helpful comments snipped out for brevity:

# cat /etc/ipsec.conf

version 2.0 # conforms to second version of ipsec.conf specification

# basic configuration

config setup

#plutodebug="control parsing"

#plutodebug="all"

dumpdir=/var/run/pluto/

nat_traversal=yes

virtual_private=%v4:10.0.0.0/8,%v4:192.168.0.0/16,%v4:172.16.0.0/12,%v4:25.0.0.0/8,%v6:fd00::/8,%v6:fe80::/10

oe=off

protostack=auto

conn l2tp-X.509

authby=rsasig

pfs=no

auto=add

rekey=no

dpddelay=10

dpdtimeout=90

dpdaction=clear

ikelifetime=8h

keylife=1h

type=transport

# This is your server's IP:

left=192.168.168.24

leftid=%fromcert

leftrsasigkey=%cert

# You will create this certificate:

leftcert=/etc/ipsec.d/certs/ipsec-serverCert.pem

leftprotoport=17/1701

right=%any

rightca=%same

rightrsasigkey=%cert

rightprotoport=17/%any

rightsubnet=vhost:%priv,%no

conn passthrough-for-non-l2tp

type=passthrough

# This is your server's IP:

left=192.168.168.24

# This is your server's gateway:

leftnexthop=192.168.168.1

right=0.0.0.0

rightsubnet=0.0.0.0/0

auto=route

Make sure that nat_traversal is on.
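A quick way to confirm the setting is to grep for it. This is only a minimal sketch: the function name nat_traversal_enabled is made up for illustration, and it does nothing more than look for an uncommented nat_traversal=yes line in the file you hand it.

```shell
# Hypothetical helper: succeed if the given file contains an uncommented
# "nat_traversal=yes" line (leading whitespace is allowed).
nat_traversal_enabled() {
    grep -Eq '^[[:space:]]*nat_traversal[[:space:]]*=[[:space:]]*yes([[:space:]]|$)' "$1"
}

# Usage:
#   nat_traversal_enabled /etc/ipsec.conf && echo "nat_traversal is on"
```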

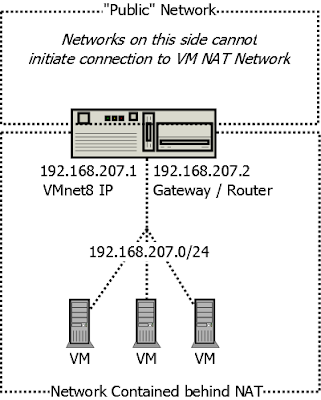

You need to provide the IP address of the interface that the IPsec connections will arrive on. If your IPsec server is behind a NAT (as in the diagram and example config), then this will be your private network IP and not the public IP.

The leftnexthop is the next-hop address for your Openswan server. Typically you can determine it like this:

# route -n

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.168.1 0.0.0.0 UG 0 0 0 eth0

192.168.168.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

In the above example, the gateway for the default route (destination 0.0.0.0) is 192.168.168.1, which is the nexthop IP we are after.
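If you'd rather not pick it out by eye, the same lookup can be scripted. The helper below is a small sketch, not something from the Openswan tooling; the name default_gateway is my own, and it assumes the column layout that route -n prints above.

```shell
# Hypothetical helper: read `route -n` output on stdin and print the
# gateway of the default route (destination 0.0.0.0, flags containing G).
default_gateway() {
    awk '$1 == "0.0.0.0" && $4 ~ /G/ { print $2; exit }'
}

# Usage:
#   route -n | default_gateway
```

The printed address is the value to put in leftnexthop.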

If you put your server behind a NAT then you need to open UDP ports 500 and 4500 on your modem and forward (pinhole) those ports directly to the internal Openswan server IP (the one you specified as "left"). This is a function frequently supported on even the most basic home routers.

Openswan - Special Notes

type=transport

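In IPsec configurations, normally you want "tunnel" over "transport", but this is one of the rare times that "transport" is necessary. L2TP already provides the tunnel, so IPsec only has to protect the L2TP packets (UDP port 1701) exchanged between the two hosts.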

rightca=%same

That's the same CA as the left (server) side. You specify this to indicate that only certificates signed by your IPsec CA are valid. You don't want anyone with a signed certificate from anywhere to get access!

L2TP / PPP

HowTo documents often recommend setting up /etc/xl2tpd/l2tp-secrets but this did not seem to work for me. In the end I needed to configure the /etc/ppp/chap-secrets file.

# cat /etc/ppp/chap-secrets

# Secrets for authentication using CHAP

# client server secret IP addresses

# * * password 192.168.168.30

ipsec-user ipsec-server.example.com password 192.168.168.0/24

The beauty of this is that all four fields are filled in, so nothing is wide open. The username "ipsec-user" and the password "password" are filled in on the Windows client in the initial connection dialogue box. The "IP addresses" field is the server's local range.
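To check that nothing has been left wide open, you can scan the file for wildcard entries. The sketch below is my own helper (wide_open_entries is a made-up name) and it assumes simple whitespace-separated entries with no quoted secrets containing spaces.

```shell
# Hypothetical helper: read chap-secrets content on stdin and print every
# non-comment entry that uses a "*" wildcard for the client, the server or
# the IP address field. The secret (field 3) is deliberately not checked.
wide_open_entries() {
    awk '$1 !~ /^#/ && NF >= 3 && ($1 == "*" || $2 == "*" || $4 == "*")'
}

# Usage:
#   wide_open_entries < /etc/ppp/chap-secrets
```

No output means every entry names its client, server and address range explicitly.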

# cat /etc/xl2tpd/xl2tpd.conf

; Output trimmed, generally

[global] ; Global parameters:

port = 1701 ; * Bind to port 1701

access control = no ; * Refuse conn without IP match

[lns default] ; Our fallthrough LNS definition

exclusive = yes ; * Permit one tunnel per host

ip range = 192.168.168.100-192.168.168.105 ; Allocate

hidden bit = no ; * Use hidden AVP's?

local ip = 192.168.168.24 ; * Our local IP to use

length bit = yes ; * Use length bit in payload?

require chap = yes ; * Require CHAP auth. by peer

refuse pap = yes ; * Refuse PAP authentication

name = ipsec-server.example.com ; * Use as hostname

ppp debug = no ; * Turn on PPP debugging

pppoptfile = /etc/ppp/options.l2tpd.lns ; * ppp options

You need to take note of the "ip range", "local ip" and "name". In this example, the "ip range" comes from the same local IP network that the IPsec listening interface is on. This was selected as a matter of routing convenience. Note that the "name" is the same value used in the server column of /etc/ppp/chap-secrets.

# cat /etc/ppp/options.l2tpd.lns

lock

noauth

#debug

dump

logfd 2

logfile /var/log/xl2tpd.log

mtu 1400

mru 1400

ms-dns 192.168.168.1

lcp-echo-failure 12

lcp-echo-interval 5

require-mschap-v2

nomppe

If you have a local router, then ms-dns should point at it; if not, try whatever DNS address you normally use in your network.

Openssl - Config

# ls -la /usr/lib/ssl/

lrwxrwxrwx 1 root root 20 Feb 1 23:16 openssl.cnf -> /etc/ssl/openssl.cnf

countryName_default = US

stateOrProvinceName_default = Oklahoma

0.organizationName_default = My Industries

Openssl - Server CA

Create a 10 year certificate authority (CA) specifically for IPsec.

# cd /etc/ipsec.d

# openssl req -x509 -newkey rsa:2048 -keyout private/caKey-ipsec-server.pem -out cacerts/caCert-ipsec-server.pem -days 3650

Openssl - Server Certificate

Now that we have a CA, we need to generate a server certificate and have it signed by that CA. This sounds like two steps when one would do, but you may need to revoke your server certificate one day.
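The signing command that follows reads a certificate request (ipsec-serverReq.pem), and the step after it reads a private key (ipsec-serverKey.pem), but the commands that create them are not shown here. One way to produce both, assuming the same file names and directory layout, is sketched below. The function name make_server_request and its arguments are mine, for illustration only.

```shell
# Sketch only: create the server private key and the certificate request
# that the signing step expects to find under <dir>/private/.
make_server_request() {
    dir=$1    # e.g. /etc/ipsec.d
    shift
    # Any extra arguments (such as -subj) are passed straight to openssl.
    openssl req -newkey rsa:2048 \
        -keyout "$dir/private/ipsec-serverKey.pem" \
        -out "$dir/private/ipsec-serverReq.pem" "$@"
}

# Usage (prompts for a key passphrase and the certificate fields):
#   make_server_request /etc/ipsec.d
```

Using the server's hostname as the Common Name keeps it in line with the "name" used in the xl2tpd and chap-secrets examples.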

# openssl ca -in /etc/ipsec.d/private/ipsec-serverReq.pem -days 3650 -out /etc/ipsec.d/certs/ipsec-serverCert.pem -notext -cert /etc/ipsec.d/cacerts/caCert-ipsec-server.pem -keyfile /etc/ipsec.d/private/caKey-ipsec-server.pem

# openssl rsa -in /etc/ipsec.d/private/ipsec-serverKey.pem -out ipsec-serverKey-rsa.pem

Openssl - Server CRL

Generate a Certificate Revocation List (CRL), which at this stage will have no revoked certificates. Later on, you will see how to easily revoke a signed certificate and add that certificate to this CRL.

# openssl ca -gencrl -keyfile /etc/ipsec.d/private/caKey-ipsec-server.pem -cert /etc/ipsec.d/cacerts/caCert-ipsec-server.pem -out /etc/ipsec.d/crls/ipsec-server.crl
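To confirm what ended up in the CRL, you can dump it with openssl crl and count the entries. The helper below is a rough sketch of my own (count_revoked is a made-up name); it simply counts the "Serial Number:" lines in the text dump, so a freshly generated CRL should report 0.

```shell
# Hypothetical helper: read `openssl crl -noout -text` output on stdin and
# print how many revoked serial numbers it lists.
count_revoked() {
    # grep -c prints 0 but exits non-zero when nothing matches, so the
    # exit status is ignored here.
    grep -c 'Serial Number:' || true
}

# Usage:
#   openssl crl -in /etc/ipsec.d/crls/ipsec-server.crl -noout -text | count_revoked
```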

Openssl - SSL Client Certificates

The easiest method (in my opinion) is to issue all certificates from the Openswan server itself. I created a script (ipsec-cert-management.sh) to step through the certificate generation steps, because doing them by hand is unnecessarily painful.

When you run this script (ipsec-cert-management.sh), you'll be prompted for a series of passwords. One is the password for the CA's private key, the other is for the new private client key you are creating.

You should create a password for your client key. When you import the key into your Windows OS, you have to enter the password once, but other people will not be able to export and use the key elsewhere unless they know the password. In other words, there is little overhead in using a passworded key, but putting a password on the key adds an extra layer of difficulty for anyone trying to steal your private key from the local system.

Below, watch for my comments:

# New PK password

# CA password

# Enter

- Where "New PK password" is for the new client key that you are generating.

- Where "CA password" is the password for the CA private key.

- Where "Enter" means do nothing, just hit the enter key.

Here we go:

# ./ipsec-cert-management.sh testguy

Generating a 2048 bit RSA private key

..................................+++

............................+++

writing new private key to '/etc/ipsec.d/clientcerts/testguy/testguyKey.pem'

Enter PEM pass phrase: # New PK password

Verifying - Enter PEM pass phrase: # New PK password

-----

You are about to be asked to enter information that will be incorporated

into your certificate request.

What you are about to enter is what is called a Distinguished Name or a DN.

There are quite a few fields but you can leave some blank

For some fields there will be a default value,

If you enter '.', the field will be left blank.

-----

Country Name (2 letter code) [US]:

State or Province Name (full name) [Oklahoma]:

Locality Name (eg, city) []:

Organization Name (eg, company) [My Industries]:

Organizational Unit Name (eg, section) []:

Common Name (e.g. server FQDN or YOUR name) []:testguy # Recommend using the client hostname

Email Address []:

Please enter the following 'extra' attributes

to be sent with your certificate request

A challenge password []: # Enter

An optional company name []: # Enter

Using configuration from /usr/lib/ssl/openssl.cnf

Enter pass phrase for /etc/ipsec.d/private/caKey-ipsec-server.pem: # CA password

Check that the request matches the signature

Signature ok

Certificate Details:

Serial Number: 13 (0xd)

Validity

Not Before: Mar 23 20:43:19 2014 GMT

Not After : Mar 20 20:43:19 2024 GMT

Subject:

countryName = US

stateOrProvinceName = Oklahoma

organizationName = My Industries

commonName= testguy

X509v3 extensions:

X509v3 Basic Constraints:

CA:FALSE

Netscape Comment:

OpenSSL Generated Certificate

X509v3 Subject Key Identifier:

X509v3 Authority Key Identifier:

keyid:

Certificate is to be certified until Mar 20 20:43:19 2024 GMT (3650 days)

Sign the certificate? [y/n]:y

1 out of 1 certificate requests certified, commit? [y/n]y

Write out database with 1 new entries

Data Base Updated

Enter pass phrase for /etc/ipsec.d/clientcerts/testguy/testguyKey.pem:# New PK password

Enter Export Password: # New PK password

Verifying - Enter Export Password: # New PK password

Import /etc/ipsec.d/clientcerts/testguy/testguyKey.p12 to your Windows client.

total 24

drwxr-xr-x 2 root root 4096 Mar 23 21:42 .

drwxr-xr-x 3 root root 4096 Mar 23 21:42 ..

-rw-r--r-- 1 root root 1322 Mar 23 21:43 testguyCert.pem

-rw-r--r-- 1 root root 3526 Mar 23 21:43 testguyKey.p12

-rw-r--r-- 1 root root 1834 Mar 23 21:43 testguyKey.pem

-rw-r--r-- 1 root root 968 Mar 23 21:43 testguyReq.pem
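The .p12 file in that listing is a PKCS#12 bundle, which is the format Windows imports. The exact command the script runs is in the script itself (see end of post); the sketch below only shows the general shape of such an export. The function name make_p12 is mine, and including the CA certificate in the bundle via -certfile is an assumption on my part.

```shell
# Sketch only: bundle a client key, its signed certificate and the CA
# certificate into a PKCS#12 file for import on the Windows client.
make_p12() {
    key=$1; cert=$2; cacert=$3; out=$4
    shift 4
    # Any extra arguments (such as -passout) are passed straight to openssl.
    openssl pkcs12 -export -inkey "$key" -in "$cert" \
        -certfile "$cacert" -out "$out" "$@"
}

# Usage (prompts for the key passphrase and an export password):
#   cd /etc/ipsec.d/clientcerts/testguy
#   make_p12 testguyKey.pem testguyCert.pem \
#       /etc/ipsec.d/cacerts/caCert-ipsec-server.pem testguyKey.p12
```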

I had written most of the content in this post before Heartbleed broke in April 2014. Until that point, I hadn't worried about digging into certificate revocation. Realistically, as a home user with an IPsec server running intermittently, I could count myself particularly unlucky if someone had not only targeted my server for a Heartbleed attack but also managed to extract private keys or passwords, especially as my machine was patched within 24 hours of the announcement of Heartbleed.

Still, there will always be a need to be able to quickly revoke a certificate, for example if I thought that my Windows machine had been compromised.

In that context, the script (see end of post) needed to be enhanced to automate the revocation of any certificate signed by the IPsec CA. Note that the script cannot revoke a certificate signed by an external CA. In other words, if you generated a signed cert with this script then you can revoke the cert with this script.

Also note that if you sign a client certificate and subsequently generate a new CA certificate, you can still revoke the previously signed client certificate using the new CA certificate.

After revoking the client certificate and restarting IPsec, new connection attempts with the revoked cert will have this signature in the "ipsec barf" logs:

May 3 21:43:43 ipsec-server pluto[6922]: "l2tp-X.509"[1] 93.104.122.73 #1: Main mode peer ID is ID_USR_ASN1_DN: 'C=US, ST=Oklahoma, O=My Industries, CN=roadwarrior'

May 3 21:43:43 ipsec-server pluto[6922]: "l2tp-X.509"[1] 93.104.122.73 #1: certificate was revoked on May 03 19:42:27 UTC 2014

May 3 21:43:43 ipsec-server pluto[6922]: "l2tp-X.509"[1] 93.104.122.73 #1: X.509 certificate rejected

May 3 21:43:43 ipsec-server pluto[6922]: "l2tp-X.509"[1] 93.104.122.73 #1: no suitable connection for peer 'C=US, ST=Oklahoma, O=My Industries, CN=roadwarrior'

May 3 21:43:43 ipsec-server pluto[6922]: "l2tp-X.509"[1] 93.104.122.73 #1: sending encrypted notification INVALID_ID_INFORMATION to 93.x.x.x:61421

May 3 21:43:48 ipsec-server pluto[6922]: "l2tp-X.509"[1] 93.104.122.73 #1: Main mode peer ID is ID_USR_ASN1_DN: 'C=US, ST=Oklahoma, O=My Industries, CN=roadwarrior'

You can't revoke a certificate unless you have it to hand. I'd deleted a lot of certs during my testing and discovered that the index or "database" of issued certificates in /etc/ssl/demoCA/index.txt was populated with many certs that I no longer had. There appears to be no way to revoke a signed certificate by the serial number alone.

However, as we use the default openssl settings in the script (see end of post) much of the time, the signed certificates are copied into /etc/ssl/demoCA/newcerts/ and named by serial number. As a result, we can revoke a certificate by its serial number by finding the matching file in that directory.
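As a rough illustration of that lookup, the helper below turns a serial number from index.txt into the matching file name. It is a sketch under the assumption that the default demoCA layout is in use; cert_for_serial is a made-up name and not part of the script from the end of the post.

```shell
# Hypothetical helper: map a serial number (as shown in index.txt) to the
# copy of the certificate that "openssl ca" keeps in newcerts/.
cert_for_serial() {
    serial=$(printf '%s' "$1" | tr 'a-f' 'A-F')
    dir=${2:-/etc/ssl/demoCA/newcerts}
    # File names use an even number of hex digits, e.g. 0D.pem, 10.pem.
    case $(( ${#serial} % 2 )) in
        1) serial=0$serial ;;
    esac
    printf '%s/%s.pem\n' "$dir" "$serial"
}

# Usage, with the CA file locations used earlier in this post:
#   openssl ca -revoke "$(cert_for_serial 0d)" \
#       -keyfile /etc/ipsec.d/private/caKey-ipsec-server.pem \
#       -cert /etc/ipsec.d/cacerts/caCert-ipsec-server.pem
```

After a revocation the CRL has to be regenerated with the -gencrl command from the Server CRL section, and IPsec restarted.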

# ls -la /etc/ssl/demoCA/newcerts/

total 64

drwxr-xr-x 2 root root 4096 May 3 22:27 .

drwxr-xr-x 4 root root 4096 May 3 22:27 ..

-rw-r--r-- 1 root root 1744 Mar 17 20:32 01.pem

-rw-r--r-- 1 root root 1724 Mar 17 20:38 02.pem

-rw-r--r-- 1 root root 1724 Mar 17 20:49 03.pem

-rw-r--r-- 1 root root 1342 Mar 17 22:28 04.pem

-rw-r--r-- 1 root root 1342 Mar 17 22:29 05.pem

-rw-r--r-- 1 root root 1322 Mar 17 22:39 06.pem

-rw-r--r-- 1 root root 1342 Mar 17 23:38 07.pem

-rw-r--r-- 1 root root 1322 Mar 18 21:06 08.pem

-rw-r--r-- 1 root root 1367 Mar 18 23:09 09.pem

-rw-r--r-- 1 root root 1330 Mar 23 14:21 0A.pem

-rw-r--r-- 1 root root 1322 Mar 23 21:40 0B.pem

-rw-r--r-- 1 root root 1322 Mar 23 21:42 0C.pem-revoked

-rw-r--r-- 1 root root 1322 Mar 23 21:43 0D.pem

-rw-r--r-- 1 root root 1330 May 3 22:27 0E.pem

# cat /etc/ssl/demoCA/index.txt

V 240314193233Z 01 unknown /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

V 240314193805Z 02 unknown /C=US/ST=Oklahoma/O=My Industries/CN=toshiba

V 240314194925Z 03 unknown /C=US/ST=Oklahoma/O=My Industries/CN=toshiba

V 240314212827Z 04 unknown /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

V 240314212952Z 05 unknown /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

R 240314213921Z 140503193859Z 06 unknown /C=US/ST=Oklahoma/O=My Industries/CN=toshiba

R 240314223758Z 140503194035Z 07 unknown /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

V 240315200605Z 08 unknown /C=US/ST=Oklahoma/O=My Industries/CN=hnzlwin7

R 240320132104Z 140503194227Z 0A unknown /C=US/ST=Oklahoma/O=My Industries/CN=roadwarrior

V 240320204055Z 0B unknown /C=US/ST=Oklahoma/O=My Industries/CN=testguy

R 240320204223Z 140503200045Z 0C unknown /C=US/ST=Oklahoma/O=My Industries/CN=testguy

R 240320204319Z 140503152636Z 0D unknown /C=US/ST=Oklahoma/O=My Industries/CN=testguy

V 240430202749Z 0E unknown /C=US/ST=Oklahoma/O=My Industries/CN=roadwarrior

To verify the loading of the CRL, "ipsec barf" will give you something like this:

000 May 03 21:43:35 2014, revoked certs: 4

000issuer: 'C=US, ST=Oklahoma, O=My Industries, CN=ipsec-server.example.com'

000distPts: 'file:///etc/ipsec.d/crls/ipsec-server.crl'

000updates: this May 03 21:42:30 2014

000 next Jun 02 21:42:30 2014 ok

and this...

May 3 21:43:35 ipsec-server pluto[6922]: Changing to directory '/etc/ipsec.d/crls'

May 3 21:43:35 ipsec-server pluto[6922]: loaded crl file 'ipsec-server.crl' (759 bytes)

May 3 21:43:35 ipsec-server pluto[6922]: loading certificate from /etc/ipsec.d/certs/ipsec-serverCert.pem

May 3 21:43:35 ipsec-server pluto[6922]: loaded host cert file '/etc/ipsec.d/certs/ipsec-serverCert.pem' (1342 bytes)

The output of the ipsec-cert-management.sh script (when revoking) will look something like this:

# ./ipsec-cert-management.sh /etc/ssl/demoCA/newcerts/02.pem

/etc/ssl/demoCA/newcerts/02.pem exists. Do you want to revoke this Certificate? [y/N]: y

Using configuration from /usr/lib/ssl/openssl.cnf

Enter pass phrase for /etc/ipsec.d/private/caKey-ipsec-server.pem:

Revoking Certificate 02.

Data Base Updated

Using configuration from /usr/lib/ssl/openssl.cnf

Enter pass phrase for /etc/ipsec.d/private/caKey-ipsec-server.pem:

Certificate Revocation List (CRL):

Version 2 (0x1)

Signature Algorithm: sha1WithRSAEncryption

Issuer: /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

Last Update: May 3 22:21:19 2014 GMT

Next Update: Jun 2 22:21:19 2014 GMT

CRL extensions:

X509v3 CRL Number:

13

Revoked Certificates:

Serial Number: 02

Revocation Date: May 3 22:21:15 2014 GMT

Serial Number: 06

Revocation Date: May 3 19:38:59 2014 GMT

Serial Number: 07

Revocation Date: May 3 19:40:35 2014 GMT

Serial Number: 09

Revocation Date: May 3 19:46:03 2014 GMT

Serial Number: 0A

Revocation Date: May 3 19:42:27 2014 GMT

Serial Number: 0C

Revocation Date: May 3 20:00:45 2014 GMT

Serial Number: 0D

Revocation Date: May 3 15:26:36 2014 GMT

Serial Number: 0F

Revocation Date: May 3 22:00:11 2014 GMT

Serial Number: 10

Revocation Date: May 3 22:02:53 2014 GMT

Signature Algorithm: sha1WithRSAEncryption

Done, check for errors above. YOU MUST RESTART IPSEC!

-rw-r--r-- 1 root root 1724 Mar 17 20:38 /etc/ssl/demoCA/newcerts/02.pem-revoked

My advice is to make sure you have (R)evoked anything you aren't sure about. At the bare minimum you will have one (V)alid certificate for your server and one (V)alid certificate for an IPsec client.

# cat /etc/ssl/demoCA/index.txt

R 240314193233Z 140503223446Z 01 unknown /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

R 240314193805Z 140503222115Z 02 unknown /C=US/ST=Oklahoma/O=My Industries/CN=toshiba

R 240314194925Z 140503223056Z 03 unknown /C=US/ST=Oklahoma/O=My Industries/CN=toshiba

R 240314212827Z 140503223459Z 04 unknown /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

V 240314212952Z 05 unknown /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

R 240314213921Z 140503193859Z 06 unknown /C=US/ST=Oklahoma/O=My Industries/CN=toshiba

R 240314223758Z 140503194035Z 07 unknown /C=US/ST=Oklahoma/O=My Industries/CN=ipsec-server.example.com

R 240315200605Z 140503223122Z 08 unknown /C=US/ST=Oklahoma/O=My Industries/CN=hnzlwin7

R 240315220911Z 140503194603Z 09 unknown /C=US/ST=Oklahoma/O=My Industries/CN=hnzlwin7/emailAddress=hnzlwin7@hnzl.de

R 240320132104Z 140503194227Z 0A unknown /C=US/ST=Oklahoma/O=My Industries/CN=roadwarrior

R 240320204055Z 140503223138Z 0B unknown /C=US/ST=Oklahoma/O=My Industries/CN=testguy

R 240320204223Z 140503200045Z 0C unknown /C=US/ST=Oklahoma/O=My Industries/CN=testguy

R 240320204319Z 140503152636Z 0D unknown /C=US/ST=Oklahoma/O=My Industries/CN=testguy

V 240430202749Z 0E unknown /C=US/ST=Oklahoma/O=My Industries/CN=roadwarrior

R 240430215836Z 140503220011Z 0F unknown /C=US/ST=Oklahoma/O=My Industries/CN=nogood

R 240430220142Z 140503220253Z 10 unknown /C=US/ST=Oklahoma/O=My Industries/CN=nogoodagain
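To see at a glance which certificates are still valid, you can filter index.txt by its first column. The helper below is a small sketch of my own (list_by_status is a made-up name). It relies on the fields in index.txt being tab-separated, with the serial number in the fourth field and the subject in the sixth.

```shell
# Hypothetical helper: read an "openssl ca" index.txt on stdin and print
# the serial number and subject of every entry with the given status
# letter (V = valid, R = revoked).
list_by_status() {
    awk -F '\t' -v want="$1" '$1 == want { print $4, $6 }'
}

# Usage:
#   list_by_status V < /etc/ssl/demoCA/index.txt
```

Run against the listing above, the V filter should leave just the server certificate (05) and one client certificate (0E).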

With this ipsec-cert-management.sh script, it's easy to add and revoke a certificate, so there's no excuse to compromise security on the grounds of complexity.

References

- Using a Linux L2TP/IPsec VPN server with Windows Vista

- Github: https://github.com/mofftech/Server-Automation/